Most AI failures in companies aren’t because the model is dumb. They happen because the company’s knowledge is messy, scattered, and outdated. If your data is chaos, your AI will confidently give you wrong answers. The fix is not a better model. It’s a structured, governed knowledge base that AI can actually understand and trust.

Claude Code and similar AI coding tools genuinely make engineers faster, but speed alone doesn't guarantee better outcomes. The real variable is whether your system can absorb an increased rate of change. The same underlying problem shows up differently depending on who you are: technical leaders see loss of system coherence, business leaders see loss of delivery predictability. Most teams try to fix this with more tooling, better prompts, or better models, when what's actually missing is a governance layer that controls how changes enter the system.

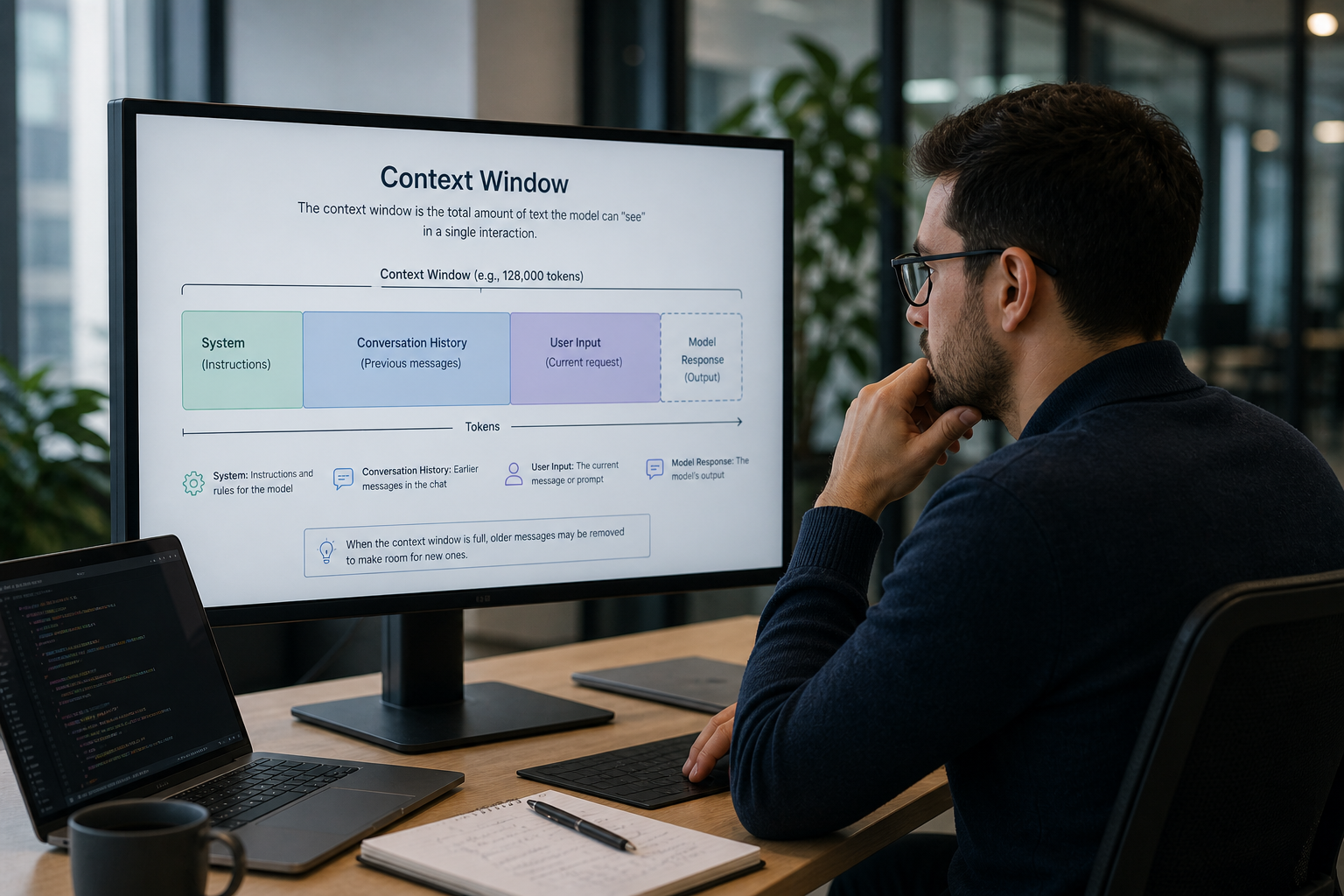

Enterprise codebases will never fit cleanly into an AI context window, and the limits are structural rather than engineering problems waiting on the next model release. Scaling AI coding agents at system level requires a structured, continuously maintained representation of the system itself, exposed to the model as a queryable map and governed by lifecycle gates that prevent "almost-right" code from quietly breaking production.

When a senior engineer leaves, the context they carried walks out with them. AI tools operating on codebases without that context make dangerous assumptions. This article breaks down why system understanding is the prerequisite for AI-assisted development, and what technical leaders should do before pointing any AI tool at their code.

When AI handles implementation, the PM role shifts from requester to governor. Requirements become the product, feedback loops compress from sprints to days, and acceptance criteria become functional specifications. This article breaks down what changes, what the role looks like in practice, and five concrete steps PMs can take now to prepare.

AI has dramatically accelerated how code is written, but it hasn’t changed how software is actually built. This mismatch is creating new bottlenecks, increasing hidden complexity, and making systems harder to trust. The next evolution isn’t better coding tools, it’s a fully structured, AI-driven lifecycle that governs how software is designed, validated, and continuously evolved.

I hallucinations in legacy systems are not a technology problem. They are a context problem. When a coding assistant breaks your database sharding logic or ignores a legacy authentication wrapper, it hasn't failed; it has simply made the most statistically likely guess in the absence of specific facts.

AI has transformed how fast software gets written. But speed at the commit layer doesn't equal velocity at the system level. This post explores why unchecked AI adoption is creating a Review Crisis across engineering organizations and what executive teams need to do to govern change intelligently before the complexity tax compounds beyond recovery.

Most organisations are buried in admin that adds little value. Traditional automation failed because real work needs context, judgement, and coordination across tools. AI agents can now handle this, but only if teams are AI native. Most AI pilots fail due to lack of understanding, not bad tech. This blog explains CloudGeometry’s five step framework (Brief, Tooling, Sprint, POC, Adoption) which cut admin by 63% and recovered 40+ hours a week.

Most AI initiatives stall not because models underperform, but because organizations fail to decide how AI behavior will be evaluated, governed, corrected, and explained. This article outlines the four foundational decisions every team must make before AI starts making decisions on their behalf, and why skipping them quietly breaks AI strategies long before anything ships.

Starting AI projects by picking tools feels like progress, but it often hard-codes architectural decisions before teams understand their risks. This article explains why tools should come last, and how treating them as replaceable implementations leads to more resilient, future-proof AI systems.

AI agents are powerful—but often overused. This piece explains the real architectural differences between single-step AI, workflows, and agents, and shows why most production systems don’t need autonomy to succeed.

The AI tooling landscape feels overwhelming because teams start with products instead of decisions. This article reframes AI tools as implementations of specific choices about control, autonomy, data, and evaluation, and shows how clarifying those decisions first makes tool selection simpler, safer, and more durable.

In 2026, AI spending scrutiny will rise. This guide helps organizations plan AI investments that survive CFO review, avoid pilot purgatory, and deliver compounding ROI through clear outcomes, defined metrics, and scalable foundations.

Get a Custom Course for your management team, to get the latest update on the stage of AI in your industry.